If you’ve worked in the professional world for any amount of time, you’ve likely learned that change is the only certain thing in business, from product changes to website redesigns to large-scale organizational changes. The UX research process can evolve and adapt to change; however, doing so often requires UX professionals to think outside the box to make or keep UX relevant and valuable in a constantly changing business landscape.

Since my arrival at T-Mobile in 2010, the company has been through three CEOs, three major company reorganizations, several minor reorganizations, a failed purchase attempt by AT&T, and countless rumors of other potential mergers. I have also participated in countless product roadmap changes, website and app redesigns, rebranding exercises, and target customer shifts. What sounds like a dismal situation isn’t unique to T-Mobile; organizational change is a reality in the business world, and while it can often be jarring, it may be necessary and positive. We as professionals are expected to adapt if we want to thrive despite our changing circumstances.

Needless to say, throughout all the organizational changes, our UX research strategy had to change right along with everything else.

The “Ideal” UX Situation?

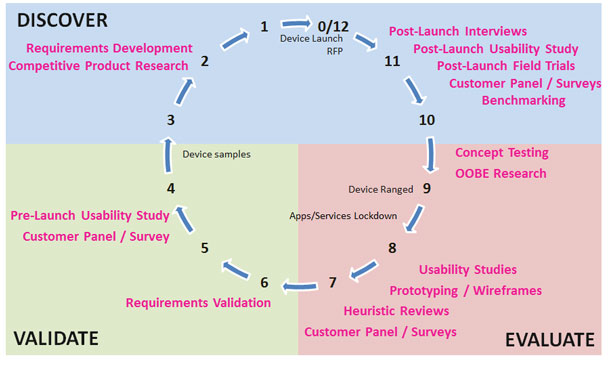

When I started at T-Mobile, we had a very well-established UX research process (see Figure 1). We had a large, 12-person research team that focused on device UI as well as T-Mobile apps and services. For devices, we did early concept research, wireframe reviews, and multiple rounds of usability testing throughout the 9- to 12-month development process, and we enforced device compliance with UX requirements before launch. In doing so, we influenced the interface design on many high-profile T-Mobile devices.

UX Research Evolution

When the company started moving away from UI enhancements and customizations on devices, there was less need for UX on the device side of the house. Our focus changed from customizing device UIs to the essential web, apps, and services that our T-Mobile customers use on a regular basis. In addition, our large 12-person research team became a small research team of two. We had to adapt our existing research process in Figure 1 to a new team with a new focus, new stakeholders with little prior exposure to UX research, and existing processes that didn’t allow much room for research. We had no choice but to adapt, streamline, and embrace new opportunities.

Opportunity: Agile usability implemented to match new development processes

We came into an organization that had a well-established Agile development process. Agile and traditional usability testing aren’t always the most complementary. Over the course of three weeks or more, our traditional usability testing often involved at least one week of prep, 10-12 participants over 2-3 days of testing, and a detailed report a week later. We were faced with the challenge of fitting ourselves into a three-week sprint cycle that, at the time, didn’t include any user research. Challenge accepted!

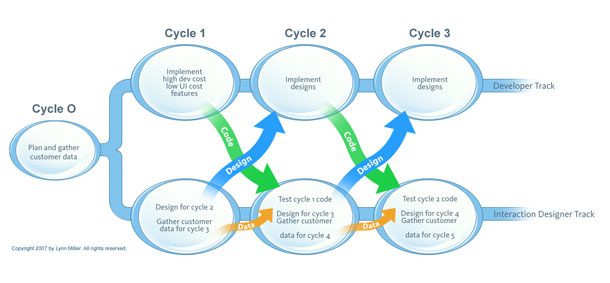

We adapted our existing research process in Figure 1 to the development process we came into, which forced us to be innovative and think outside the box. We created an “Agile-friendly” usability process that aligned with the existing sprint cycles. The goal was to identify major usability issues and recommendations where applicable, and iterate and test again three weeks later as needed. We set aside one day every three weeks for an in-person usability testing session with five participants (and that’s it).

Participants were recruited via an external agency. Since the topics weren’t known far in advance, we used a fairly broad screener based on the company’s general target consumer. We held a planning meeting the week prior to the session, and any interested stakeholders could attend and bring their test topics to the table. We were usually able to accommodate all the requests for that session, typically 2-3 different topic or project areas. If there was a conflict, the lowest priority topic shifted to the next session. In the week between the planning meeting and the session, we worked with stakeholders to gather materials and create the test plan. Fidelity of materials varied from functional code to clickable wireframes or design prototypes to paper comps, depending on what was ready for that session. After the session, our goal was to have a topline summary report and meet with stakeholders the following day. There were no long reports, no video highlight clips, and no spreadsheets to track recommendations.
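The planning rule described above (rank the requested topics, fit two or three into the session, and move the lowest priority ones to the next session) can be sketched in a few lines of Python. This is only an illustration, not the tool the team used: the `Topic` class, the `plan_session` function, the numeric priority scale, and the topic names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Topic:
    name: str
    priority: int  # assumption: 1 is the highest priority


def plan_session(requests, capacity=3):
    """Split topic requests into this session's agenda and a deferred list.

    Topics are ranked by priority; anything beyond `capacity` is carried
    over to the next session.
    """
    ranked = sorted(requests, key=lambda t: t.priority)
    return ranked[:capacity], ranked[capacity:]


# Hypothetical requests brought to a planning meeting
requests = [
    Topic("checkout flow", 2),
    Topic("account sign-in", 1),
    Topic("plan comparison page", 4),
    Topic("bill pay app screen", 3),
]

agenda, deferred = plan_session(requests)
print([t.name for t in agenda])    # ['account sign-in', 'checkout flow', 'bill pay app screen']
print([t.name for t in deferred])  # ['plan comparison page']
```

The deferred list would simply be added to the requests at the next planning meeting, so no topic is dropped, only delayed by one three-week cycle.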

We were concerned that the team would be skeptical since they previously had no user research; instead, they had the opposite reaction. They were so excited to finally have access to user feedback. In this case, change was good! After almost a year, this Agile usability method is now a regular, relied-upon method for the team. We have identified many critical issues that would never have been discovered previously and that would have resulted in abandonment or users calling customer service. In many cases, our new method has replaced the need for more standard usability studies since the team has a consistent venue for getting customer feedback and is able to make changes iteratively.

Agile research has also enabled us to rethink our usability reports and documentation. Our quick, next-day, topline summaries and in-person reviews with stakeholders are more effective than the traditional, lengthy, “thrown over the fence” reports. Immediately following the sessions (sometimes during the sessions with stakeholders present), we empty our heads of key findings from the study. Often this involves a whiteboard or printout of the flow with handwritten callouts for specific problem areas. We then translate our list into a few slides with screenshots, bulleted findings, and sketched recommendations, if needed. Instead of poring over videos and detailed notes, we capture the core issues and share them with stakeholders quickly so they can begin implementing the changes. In doing so, we have drastically reduced the amount of time spent on post-study analysis and report writing.

Lessons Learned: Strategies for Adapting to Organizational Change

Our Agile usability is just one example of how I have embraced and adapted to change in my career. There are several other examples—and key takeaways—from my experiences with organizational change over the past few years. When faced with change, it is important to know how to make the most of it using the following strategies so it becomes a positive, growth-producing experience.

Strategy 1: Accept that change is inevitable.

It sounds cliché, but you will experience a certain amount of change in your career. Changes can be big, like the ones we experienced: new teams, limited resources, new processes, new managers, or new products. Or they can be smaller: shifts in priorities, schedules, or designs. All will affect your work; it’s just a matter of whether and how you adapt. You have to learn to adjust to whatever circumstance you find yourself in. That flexibility is the key to your success as a researcher and to getting research adopted and incorporated into the process.

Make the most of change! We implemented a more efficient process that allowed us to execute research and report findings more quickly. But if it hadn’t worked we would have tried something else…as should you! Question the status quo and figure out how to modify it to work for your situation, your team, and your products.

Strategy 2: Make your value known.

If you find yourself on a new team or with a new manager who doesn’t necessarily know the value of UX research, let them know who you are and what you can offer. It is up to you to evangelize yourself and educate people about the value you can provide. Start small! When we moved to an entirely new organization, we met with our key stakeholders, explained to them what we could do, and asked them what questions they had for T-Mobile users. Then we created a research plan to answer those questions.

To broadly expose others to our services, we presented at an all-hands meeting and showcased our past work by creating a shared, searchable, online repository of our research reports. If your company is more numbers-focused, try sharing the number of studies you have conducted, the number of high priority findings you have uncovered, improvements between versions of a product, or how research has been used to inform business decisions. The key is getting yourself out there and establishing yourself as the subject matter expert about your users.

Strategy 3: Invite yourself to the table.

As a researcher, you have to be proactive; you can’t expect others to include you in the process. Ask to be included from the beginning. Often stakeholders (even designers) will bring research in only if there is extra time at the end. Ask to be invited to design reviews, or just go. Stakeholders often have upfront questions for users; they just don’t have a good way to get the answers or don’t think about them until you’re in the room. If they know research is available, it’s much easier for them to get real data to make decisions. Make yourself an indispensable resource!

It is also essential that you share your findings effectively. You cannot just throw reports over the fence and expect findings to magically fix themselves. Keep your reports short and sweet—no one wants to read a lengthy report (and they won’t). We make it a point to have brief reports by the next day; if we anticipate it taking longer we’ll send, at minimum, a summary email or slide of the main issues to give stakeholders something to start with. Consider what is most effective for your team, be it in-person reviews or session recaps (which work very well if stakeholders attended the sessions), prioritized recommendation lists, or some other format.

Lastly, post-study follow-up is crucial. Engage with the designers as they are making usability fixes. Invite yourself to post-study design reviews. Check in with product managers about if and how they’re using the results. Don’t let your work slip through the cracks—it’s ok to be a pest!

Strategy 4: Adapt yourself to the process. Don’t expect the process to adapt to you.

If you join a team with well-established processes, they may not be willing to change anything to fit you in. In our example, we shifted our traditional usability process (3-week minimum turnaround, 10-12 participants, detailed reports) to accommodate a team that wanted user feedback but had limited time in their schedule. Our new Agile usability method (1-day study, five participants, brief reports) proved to the team that they could incorporate user research into their process. Now the team is willing to set aside time for a dedicated, more traditional usability study if/when the need arises. If you can prove your value within existing processes, people will be much more willing to fit in research in the future.

Embrace Change

While it can be frustrating and hard initially, organizational change can often be a good thing. Change has forced us to explore new methods, which in turn has helped our team expand and improve our skillset, making us better researchers and more valued team members. Embrace changes as they occur and make the most of them, and if the first thing doesn’t work, try something else. When faced with large or small business changes, don’t be afraid to invite yourself to the table and insert yourself into existing processes to prove how UX research can be relevant and valuable for your organization.

Text from Figure 1: Research process throughout 12-month device development lifecycle.

10-12 months before launch (previous device launches, RFP): Post-launch interviews, post-launch usability study, post-launch field trials, customer panel/surveys, benchmarking

7-9 months before launch (device is ranged, apps and services locked down): Concept testing, OOBE research, usability studies, prototyping/wireframes, heuristic reviews, customer panel/surveys

4-6 months before launch (device samples provided): Requirements validation, pre-launch usability study, customer panel/surveys

1-3 months before launch: Requirements definition, competitive evaluation


Text from Figure 2: UX in Agile Product Development

Cycle 0: Plan and gather customer data

Cycle 1: Developers implement high dev cost/low UI cost features. UX designs for cycle 2 and gathers customer data for cycle 3.

Cycle 2: Developers implement designs from cycle 1. UX tests cycle 1 code, designs for cycle 3, and gathers customer data for cycle 4.

Cycle 3: Developers implement designs from cycle 2. UX tests cycle 2 code, designs for cycle 4, and gathers customer data for cycle 5.


Change is inevitable. Large or small, you will experience change in your work as a UX researcher. T-Mobile evolved its UX research process in response to large-scale business changes. As you adapt to change, several key strategies can help you stay relevant: make yourself known, invite yourself to the table, streamline how you execute research, and adapt yourself to the process rather than expecting the process to adapt to you.