It’s tempting to think of closed captioning as a rote, strictly objective task. Captioners copy down what people are saying, a task so easy, even a computer can do it. For example, Google uses speech recognition technology to fully automate the captioning of YouTube videos. The results of autocaptioning are far from perfect, but the basic assumption behind this effort is that automation is possible because captioning is nothing more than mechanical transcription.

In a number of definitions, the act of captioning takes a back seat to the technology that allows the words to be displayed on the screen. For example, Merriam-Webster’s definition of “closed captioned” reduces captioning to a technology of display: “having written words that appear on the screen to describe what is being said for people who do not hear well and that can only be seen if you are using a special device.” In this definition, what’s notable about captioning is not the process that creates those words, but the technology that displays them on the screen.

However, I’ve come to discover that captioning—the process of making multimedia content accessible to deaf and hard-of-hearing viewers—can be highly complex and deeply interpretative. Moving from sound to writing involves a number of decisions about which sounds to caption and how to caption them. While captioning can be simple and straightforward under ideal conditions (for example, a single speaker in a quiet room who clearly articulates every word at a normal volume and speed), the ideal gives way quickly to the real, messy world of YouTube videos, TV shows, and movies. In this world, sounds accumulate, overlap, and compete with one another: multiple and overlapping speakers, indistinct and indecipherable speech sounds, environmental and ambient noises, background music and music lyrics, speaker dialects and other pronunciation variances (for example, impersonation), differences in location (close versus distant sounds), and differences in volume (loud versus quiet sounds). Some sounds are discrete, such as a phone that rings once, and others are sustained, like the persistent low hum that provides ambience on the engineering deck in Star Trek: The Next Generation. Some speech sounds have clear referents; we can see who is speaking. Other speech sounds originate off-screen from unknown speakers, requiring speaker identifiers in the captions.

Four Principles of Closed Captioning

Not every sound and quality can be captioned. A lot of information must be squeezed into a small space for readers who can only be expected to read so quickly. (For reading speed guidelines that tell captioners when they should consider editing verbatim speech, see the Described and Captioned Media Program’s 2011 “Captioning Key” page at www.dcmp.org.) Captioning is not simply about pulling out the most important sounds—foreground speech usually being the most important in a scene—but interpreting the sonic landscape and the intentions of the content creators. Ambient sounds, indistinct or background speech, music, and paralinguistic sounds such as laughs and sighs can be captioned in any number of ways, and the captioner must decide which sounds to caption and how. In my forthcoming book on closed captioning, Reading Sounds, I offer four principles of closed captioning that move us towards a broader, more complex view of captioning:

- Every sound cannot be closed captioned.

- Captioners must decide which sounds are significant.

- Captioners must negotiate the meaning of the text.

- Captions are interpretations.

This perspective privileges context, audience, and purpose over simple transcription and automation. It also recognizes the very human, interpretative work that captioners do when they invent words, or turn to boilerplate captions such as [indistinct chatter] for sounds that are ambiguous.

Quality captioning is a personal issue for me. My younger son, now sixteen, was born deaf. Years before I ever considered writing about closed captioning, I was a regular, daily viewer of closed captioned media. Today, I rely on closed captions to provide a level of access I can’t quite seem to reach without them. There’s something about “reading a movie”—experiencing it through the rhetorical transcription of its soundtrack—that is more satisfying to me than listening alone. Because captions clarify sounds, I don’t have to strain to make out foreign terms or strange names, such as “flobberworm” (and a host of other strange terms) in Harry Potter and the Prisoner of Azkaban (2004). Because captions formalize speech by presenting it most often in standard English, I can read and understand the text quickly, even if some of the embodied aspects of that speech are scrubbed clean or squeezed into manner of speaking identifiers such as [in silly voice], [heavily accented], or [drunken slurring]. Because captions tend to equalize sounds by making all sounds equally “loud,” I can quickly decode quiet or background sounds (at least those that are captioned). These effects change the text in complex ways, creating a new text and a different experience of the film or TV show. These differences are missed when we equate captioning with transcription or assume that it is a simple practice to be automated.

While closed captioning is primarily intended for viewers who are deaf or hard-of-hearing, it benefits diverse audiences: children learning to read, college students searching captioned lectures by keyword, older adults who don’t hear as well as they used to, night owls who don’t want to wake sleeping partners, restaurant patrons watching a muted TV from across the room, and people with other disabilities. These benefits are consistent with the claims of universal design.

To better understand the complexity of closed captioning and its highly interpretative product, let’s explore examples from the two films The Artist and Knight and Day, and the long-running animated TV show Futurama.

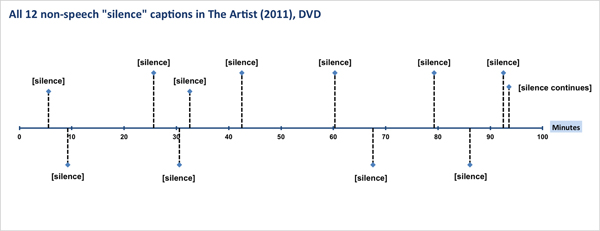

The Artist and Captioned Silences

Closed captioning must account for the assumptions we make about how sounds are made. Because we assume that objects interacting in space, and people moving their lips, usually make noise or speech, the captions need to tell us when our assumptions are thwarted. Sometimes, this requires the captioning of silences, including mouthed words. The Artist (2011), a Best Picture Academy Award winner produced in the style of a silent film and set in the period just before and after the advent of talkies, includes twelve non-speech “silence” captions, eleven of which are [silence] and one of which is [silence continues]. Interestingly, the word “silence” appears only four times in the official screenplay by director Michel Hazanavicius. In other words, silence is not poured into the captions from the script but invented in the captions.

| Start Time | Closed Caption | Movie Chapter |
|---|---|---|
| 0:05:31 | [silence] | 1 |
| 0:09:14 | [silence] | 2 |
| 0:25:40 | [silence] | 4 |
| 0:30:35 | [silence] | 5 |
| 0:32:35 | [silence] | 5 |
| 0:42:31 | [silence] | 7 |
| 1:00:19 | [silence] | 9 |
| 1:07:35 | [silence] | 10 |
| 1:19:16 | [silence] | 11 |
| 1:26:08 | [silence] | 12 |
| 1:32:29 | [silence] | 13 |
| 1:33:35 | [silence continues] | 13 |

The Hypnotoad and the Importance of Context

Recurring characters and sounds on long-running TV shows also provide an opportunity to explore the subjective, interpretative nature of closed captioning. Take, for example, the Hypnotoad, a minor character on the animated TV show Futurama (see the first video clip for more information). A couple of things become clear right away: 1) the same non-speech sound can be captioned a number of different ways, even when the context is held fairly constant, and 2) captions show a lack of consistency or “series awareness” across episodes. Even the same sound in the same episode may be captioned differently on TV and DVD. Most importantly, the Hypnotoad captions provide a dramatic example of how context controls interpretation. The sound the Hypnotoad makes is reportedly a “turbine engine played backwards” but the visual context determines that the captions will be driven by the sound’s function and not its source.

In every case, the closed captions reveal how the apparent origin of the sound the Hypnotoad makes (the Hypnotoad produces or controls the sound) trumps its actual origin (a turbine engine actually produces the sound). For example, when the Hypno “sound” is captioned as [Eyeballs Thrumming Loudly] in Season 4, Episode 6 (“Bender Should Not Be Allowed on Television,” 2004), the caption reinforces the visual context for the sound. The sound apparently emanates from the toad’s eyes, so that’s how the sound is captioned. It’s not surprising that sound in film and TV shows would direct us towards constructed as opposed to real causes. What’s of interest here is that captioners don’t agree on the cause and nature of the Hypnosound.

The Hypnotoad captions raise a number of questions for caption studies about the best way to present the sound to caption readers (shown in Table 2):

| Futurama Episode or Movie | Hypnotoad Caption (as seen on screen) | Source |
|---|---|---|
| “The Day the Earth Stood Stupid” (2001) | [Electronic Humming Sound] [Electronic Humming] | Netflix and DVD |
| | [Low Humming] None None | Broadcast TV |
| “Bender Should Not Be Allowed on Television” (2004) | [Eyeballs Thrumming Loudly] | Netflix and DVD |
| | (sustained electrical buzzing) | Broadcast TV |
| Bender’s Big Score (2007 DVD movie) | (droning mechanical sputtering) | Netflix |
| | (Mechanical Grinding) | DVD |
| “Everybody Loves Hypnotoad” (DVD special feature on Bender’s Big Score) | None | DVD |
| Into the Wild Green Yonder (2009 DVD movie) | None | Netflix and DVD |
| “Rebirth” (2010) | (LOUD BUZZING DRONE) | Netflix and DVD |
| | (deep, distorted electronic tones blaring) | Broadcast TV |
| “Attack of the Killer App” (2010) | None | Netflix and DVD |
| | (mechanical humming) | Broadcast TV |
| “Lrrreconcilable Ndndifferences” (2010) | None | Netflix and DVD |
| | (electronic static) | Broadcast TV |

Which action verb is best? Which of the verb+ing options captures the hypnotic function of the Hypnosound: humming, thrumming, buzzing, droning, sputtering, or grinding? Are the present participles mostly synonymous or does one option suggest a greater degree of hypnosis?

Should the sound be captioned or not? The same sound in the same episode may or may not be captioned (compare the TV versus DVD versions of the same episode). It depends on whether the captioner has identified the sound as significant.

Should the sound be identified as loud or not? Even though every instance of the Hypnosound except one is roughly the same volume, the captions identify the sound as loud in three instances: [Eyeballs Thrumming Loudly], [LOUD BUZZING DRONE], and [deep, distorted electronic tones blaring].

Where does the sound originate? The nature of the sound is described as either electronic, mechanical, or biological (for example, emanating from the eyeballs). Every caption, except perhaps for [LOUD BUZZING DRONE], describes the sound using one of these three options, with electronic/electric used four times (five if you add the second occurrence of electronic in the same caption file for “The Day the Earth Stood Stupid”). Mechanical is used three times. The biological option appears once and only in an implied form. But the biological option is perhaps the most compelling for caption studies, for only in [Eyeballs Thrumming Loudly] does the sound find a specific location in or around the toad. These different options cannot simply be chalked up to the nature of the sound or what the producers of the show have said about the sound (because, as far as I can determine, the producers have given no indication of how the Hypnosound should be described or where it comes from).
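For readers who want to check the tally of sound origins against Table 2, here is a minimal Python sketch. It is only an illustration: the caption list is transcribed from the table, while the keyword stems, the "unspecified" label, and the function names (`classify_origin`, `tally_origins`) are my own assumptions, not part of any captioning standard or tool.

```python
from collections import Counter

# Captions transcribed from Table 2 (every entry other than "None").
HYPNOTOAD_CAPTIONS = [
    "[Electronic Humming Sound]",
    "[Electronic Humming]",
    "[Low Humming]",
    "[Eyeballs Thrumming Loudly]",
    "(sustained electrical buzzing)",
    "(droning mechanical sputtering)",
    "(Mechanical Grinding)",
    "(LOUD BUZZING DRONE)",
    "(deep, distorted electronic tones blaring)",
    "(mechanical humming)",
    "(electronic static)",
]

# Hypothetical keyword stems, chosen for this illustration only.
ORIGIN_STEMS = {
    "electronic/electric": "electr",
    "mechanical": "mechanic",
    "biological": "eyeball",
}


def classify_origin(caption: str) -> str:
    """Return the first origin whose stem appears in the caption text."""
    text = caption.lower()
    for origin, stem in ORIGIN_STEMS.items():
        if stem in text:
            return origin
    # No origin keyword found in the caption.
    return "unspecified"


def tally_origins(captions):
    """Count how many captions fall under each origin label."""
    return Counter(classify_origin(c) for c in captions)


if __name__ == "__main__":
    for origin, count in tally_origins(HYPNOTOAD_CAPTIONS).most_common():
        print(f"{origin}: {count}")
```

Under these assumptions, the tally gives five electronic/electric captions when both captions from “The Day the Earth Stood Stupid” are counted, three mechanical, and one biological. Captions with no origin keyword, such as (LOUD BUZZING DRONE), fall into the "unspecified" bucket. Simple keyword matching is, of course, exactly the kind of mechanical reading that the captions themselves resist, so the sketch is a counting aid and nothing more.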

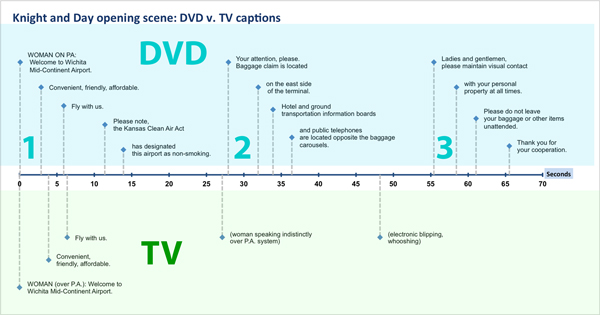

Knight and Day: A Film with Radically Divergent Interpretations

Knight and Day (2010) stars Tom Cruise and Cameron Diaz with a supporting cast of car chases and gunfire. What matters here are the radically divergent interpretations made by the DVD and TV captioners in the opening scene. At the heart of these differences is the question of what to do about the ambient noise, specifically the background music and mostly indecipherable public announcement (PA) in the airport.

The TV captions come closer to approximating the experience of the airport PA as ambient noise (rather than distinct speech), but they are no less partial, selective, or incomplete than their DVD counterparts. When the two streams are visualized on the same timeline, we see a number of differences along with one similarity. Both the TV and DVD clips begin with the same three basic captions, which are also the loudest and clearest portions of the PA announcement. After that, all bets are off.

The DVD captions interpret the scene exclusively through the mostly inaudible speech of the PA, relying on thirteen speech captions from the PA announcement, even though the PA can’t be heard clearly. The PA is intended to provide ambience or background noise for the airport scene, but captioning these sounds verbatim transforms them into foreground sounds. The captioned speech in the DVD version falls roughly into three clusters (0-14 seconds, 28-36 seconds, and 55-66 seconds). If the DVD captions exaggerate the prominence of the PA announcement, the TV captions adopt a minimalist approach.

After the first three PA captions, two non-speech captions round out the scene on TV, one of which appropriately characterizes the PA speech as indistinct, while the other describes the arcade game sounds. Interestingly, neither set of captions accounts for the music, even though the music provides the controlling mood for the scene, which begins on the beat of Cruise’s first step and reaches a crescendo when it draws the two main stars together at the end of the clip.

These two contrasting interpretations of the soundscape reflect the deeply subjective and even arbitrary nature of closed captioning. Two caption readers, one viewing the TV version and the other the DVD, will experience two fundamentally different texts.

Why Captioning Matters

Sound is complex. Captioning is an art. We can’t leave the task to auto-transcription programs or untrained captioners. We do a disservice to those who depend on quality captioning when we assume that captioning is simple enough to be treated as an afterthought. But when we explore captioning as a complex, curiously rich text, we raise the profile of accessibility and suggest, in a small way, that something as potentially intricate as closed captioning deserves to play a more central role in our usability studies and multimedia projects.

More Reading

The Artist movie script by Michel Hazanavicius is available on imsdb.

“Hypnotoad” entry on Futurama Wiki.

“Presentation Rate,” The Described and Captioned Media Program.

“Which Sounds Are Significant? Towards a Rhetoric of Closed Captioning,” Sean Zdenek, Disability Studies Quarterly 31.3.