Figure 1: Day 203/365 Dog Food (Credit: Anne Hornyak, Wikimedia Commons).

Unicorn Nation

I recently ran an online poll to learn more about the perceived roles of user experience professionals and how those roles are adapting in our ever-evolving technological landscape. The poll simply asked participants whether they considered themselves pure researchers, pure designers, primarily one or the other, or the rare and mythical “unicorn” who performs both roles.

To my surprise, 65% of the respondents considered themselves unicorns, that is, equally adept at both research and design. A further 20% described themselves as primarily one role (mostly designers) who also perform the other role. Only 15% described themselves as pure researchers or pure designers. It was astonishing that 85% of the respondents viewed themselves as having, at least in part, a skill set that industry considers mythologically rare.

Figure 2: Unicorn Purifying Water (Credit: Jesse Waugh, Wikimedia Commons).

By “design” and “research,” I refer to the roles specifically, not individual UX designers or researchers. In this article, I use “design” to refer to either a UX designer or a UX unicorn acting in the design role.

At the same time, we have seen a decline in the effectiveness of UX efforts. Many managers and practitioners express a desire for greater depth in their UX research and design efforts. They feel a need to bring more rigor to their research findings and interactions, beyond finding standards issues and surface user interface issues. The Agile process has not helped: It has been notoriously hard on the user experience process, and on research in particular, because it allows less time for design cycles and cuts into solid user research.

Is there something in the current environment that leads us to lose rigor and depth in our UX process? How might this trend toward the expectation of UX generalists and unicorns relate to the decline in depth of practice?

Purpose

In more than 25 years as a UX’er, I’ve had the privilege of working alongside some of the best researchers, designers, and unicorns across major domains in finance, utilities, pharma, manufacturing, and military. I’ve observed and learned the mindsets and attitudes that go into making a world-class user experience. But more importantly for this article, I’ve had the opportunity to personally work as a formally trained researcher and as a designer, and I understand the tasks and mindset involved in both areas. This article is an attempt to articulate what I perceive as the vital elements differentiating these roles and the areas in which performing both might result in cognitive dissonance or performance degradation.

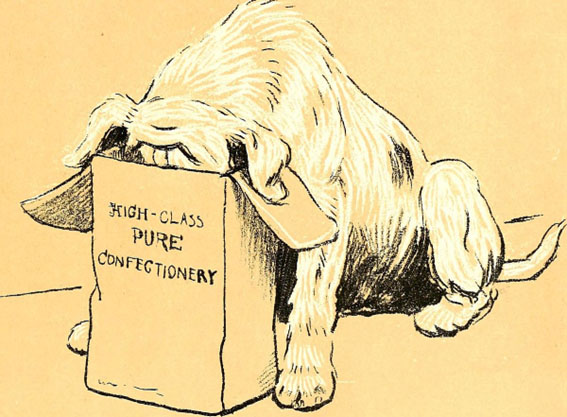

Figure 3: Walter Emanuel, A Dog Day, Wikimedia Commons.

Eating Our Own Dog Food

Consider that one of the main goals in UX is the analysis of others’ workflows, tasks, and goals. I find it a bit ironic that we are so bad at using our own tools to examine our own jobs and “eat our own dog food.” To the best of my knowledge, there is no research showing that a blended skillset increases effectiveness, nor any Goals, Operators, Methods, and Selection (GOMS) analysis of the blended role.

Metacognitive Processes

For the purpose of this article, I am interested in the very high-level thought processes of the researcher, the designer, and the unicorn. How do the different roles approach a problem, what cognitive tools do they use, and what is their outlook on life?

Why is that? Because any conflicts between these roles won’t be found at the actual task level. By that, I mean the actual tasks of the UX practitioner can be, and have been, taught and carried out for years. But just as a mechanical engineer might view the world through a different lens than an artist, the tasks we are asked to perform and the cognitive tools we have available to do those tasks shape our thinking and approach to a problem. That particular perspective is what I focus on in this article because I believe it has a significant influence on how we approach UX problems.

Mindset and Cognitive Dissonance

Let’s start by looking at the differences in the metacognitive process in research versus design, by which I mean a pure researcher, a pure designer, or the unicorn who must “mode switch” while performing the different activities. As you read, keep in mind that mode switching is really the key to understanding any performance degradation that might occur from a blended skillset.

Concept Definition: Certainty versus Uncertainty

Managing certainty and uncertainty is the prime driver of concept definition. If we had 100% design certainty, there would be no need for research. Having 100% design uncertainty would prevent a design from starting. So, concept definition moves back and forth between these two states. But how do the UX roles approach concept definition differently?

On the research side, think about any of the great researchers: Einstein, Watson, Crick, and Hawking. Their work explored multiple competing hypotheses simultaneously while attempting to rule out as many as possible with hard data. The risk for any serious researcher is that you rule out a hypothesis unnecessarily, invalidating your work. Your goal, then, is to keep the competing hypotheses viable until you have undeniable proof to the contrary. You employ tools that were designed to manage that uncertainty: the Analysis of Variance (ANOVA) test, null hypotheses, scientific methodology, and so on. In this way, uncertainty is the economy of trade for the researcher.

Contrast that with the task at hand for the designer. The artist Michelangelo was quoted as saying: “The sculpture is already complete within the marble block, before I start my work. It is already there, I just have to chisel away the superfluous material.”

That is to say, Michelangelo held an image in his mind that drove him toward the goal of the finished artifact. The quote demonstrates the need for certainty in design: Experimentation becomes the enemy because you can’t put the marble back on the sculpture once it is removed. In the modern world of software development, time pressures and deadlines take the place of marble. The degrees of freedom become even tighter with the constraints of screen sizes, UI standards, and user expectations that must be met.

Observation 1: In this respect, I submit that certainty becomes the friend of the designer, but the enemy of the researcher.

Artifact Progression: Constructive versus Deconstructive

This construct refers to whether or not the steps contributed by the role move a design toward the current perceived finished goal. For instance, the designer places objects on the artifact toward their best guess as to what the final design should look like, based on the best information they have at the time. This initial design is most likely not the optimal or final design, but the steps taken progress the design towards the goal.

The pull of research is in the opposite direction; that is, the recommendations provided by research will generate anything from no change in the best case to radical redesigns in the worst case. Of course, these recommendations lead to a better and hopefully optimal design, but the fact remains that they generate the reworks needed to optimize the final design.

Please don’t interpret this construct as saying that research isn’t contributing anything positive to the project or that it is holding it back. On the contrary, it ensures the right design gets built; think of this in terms of the tension in the flow toward a target design. Looking at it from this perspective shows that there are opposing goals between the design and research roles.

Observation 2: There is a tension between the contributions of design and research to artifact progression.

System Trust: Confidence versus Skepticism

Related to artifact progression, as a constructive activity, design must have a foundation of confidence as a force that moves the project forward. This confidence is drawn from a trust in mental models, experience, and design standards that guide toward a viable finished product. Yes, there are reworks of the concept, adjustments, and redesigns. And yes, confidence in research validates a concept. But the very act of placing an object on the screen is one of belief in the process that it will produce a viable final design. Without this design confidence, the designer would either constantly restart from scratch, work multiple simultaneous designs, or just get frustrated and seek employment elsewhere.

On the other hand, research by its very nature is a skeptical activity, created specifically to challenge the norm. In fact, the Merriam-Webster dictionary defines research as “…revision of accepted theories or laws in light of new facts.” Research’s role is to test each assumption in order to ensure it is correct. It is true that many of the usability issues found are a result of standards lapses, but even these types of known issues have to be evaluated by the researcher against the dynamic context of the user workflow.

Observation 3: Trust and skepticism are diametrically opposed. You can’t do both simultaneously.

Hypothesis Ownership: Invested versus Impartial

This construct speaks to how an individual approaches or manages design hypotheses.

Have you ever paused and considered exactly what is being tested during research? Screens, artifacts, and placement of the features, you might think. But that is not quite it, is it? The screens, features, and artifacts we create are really a communication medium, a visual language for communicating ideas and concepts. Research is about testing concepts and mental models, more so than pixels. The arguments about a particular design always fall into this category: “We need to add all 50 items to the menu because our users want to see everything all at once.” A hypothesis about the user’s mental model is translated into an artifact feature, and it is that feature which is then tested.

This is the point at which testing bias is introduced. If a designer researches their own design, they have full knowledge of the competing mental models (and have undoubtedly chosen a favorite). It is this favorite that has a well-documented advantage over its competitors. This was driven home during a strategy meeting when I heard a designer say, “I need to do my own research so I can back up a position I’ve taken,” one of the clearest declarations of testing bias I’ve heard. J.J. Knowles wrote The Scourge of the Leading Question, which speaks to how bias can affect results; for this reason, research must be conducted with a tabula rasa, a blank slate toward these competing hypotheses.

How It Applies

I recently observed a usability test, administered by a designer who was doing his own research, in which I heard the following question: “Would you like X feature there so you can do Y? Or not.”

There were a dozen or so leading questions like this during the session. You might have heard similar, seemingly innocuous questions during tests you have been involved with, or you might have even thrown in a few yourself (Molich et al. wrote a great best practice article on this topic). The research savvy have no doubt already identified it as a closed-ended, somewhat thinly disguised dichotomous question, but we must dig a little deeper than that. This question is a great springboard to demonstrate our constructs in action.

- Concept Definition—Certainty/Uncertainty: This question drives at the heart of the certainty/uncertainty construct. The designer seemed uncomfortable with the uncertainty behind an open-ended question, so they framed the question in very concrete terms.

- Artifact Progression—Constructive/Deconstructive: Likewise, providing the “guard rails” of a very concrete question, the designer steers the project in a clearly constructive direction. Asking something more open-ended might bring the whole page or concept into question, a much more deconstructive activity.

- System Trust—Confidence/Skepticism: Many of these types of guard rail questions emerge from an over-reliance on (or over-trust in) the design system. Asking this type of guided, dichotomous question ensures that the answers are given in the context of the existing design system being employed. The open-ended approach might produce an answer that challenges the thinking of the design system, such as by introducing a concept from a different design system.

- Hypothesis Ownership—Invested/Impartial: This is a prime example that shows how design bias is introduced into a design, and it is probably the biggest issue people bring up when talking about blended roles. I was not privy to the discussions leading up to this test, but my guess is that the designer is fully invested in the “yes” answer. Note the imbalance in the word count in the question, “Would you like X feature there so you can do Y? Or not.” Eleven words are weighted toward the “yes” answer, and just two toward the negative.
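The word-count imbalance noted in the last bullet can be checked mechanically. The following is only a minimal Python sketch, not a tool used in the test described above: the function name `word_balance` is hypothetical, and treating everything before the first question mark as the “yes” framing is an assumption made for illustration.

```python
from typing import Tuple


def word_balance(question: str, separator: str = "?") -> Tuple[int, int]:
    """Count the words before and after the first separator.

    Assumption: the text before the separator frames the "yes" option,
    and the text after it frames the negative option.
    """
    head, _, tail = question.partition(separator)
    return len(head.split()), len(tail.split())


question = "Would you like X feature there so you can do Y? Or not."
yes_words, no_words = word_balance(question)
print(yes_words, no_words)  # 11 2
```

Word count is a crude proxy for bias, but a lopsided result like this one is a reasonable prompt to rewrite the question in open-ended form before the session.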

Conclusion

To bring these metacognitive constructs together, I’d like to remind the gentle reader that the goal of this article is to identify sources that might suppress efficiency in your UX process. Given the pervasiveness of the blended skillset, it would defy logic to say that it can’t be done or that the practice should stop. Simply identifying these sources is the first step in bringing your UX practice to the next level.

The issues we’re trying to solve when we combine the roles of design and research reside at the metacognitive level, the deepest level of cognition, where planning, strategies, motives, and even life views live. A pure researcher will use a particular set of metacognitive constructs, and a pure designer will use a different set. Combining these two roles, in which one switches back and forth between design and research, sets these metacognitive processes at odds with each other. It goes much deeper than mode switching in which you can just “turn on your research mindset” and forget about your design mindset (or vice versa). You can physically do the activities you’re required to do for each role, but you won’t do them as well as someone in a dedicated role.

Bill Schmidt has been privileged to work on a wide range of fascinating projects in the 25 years he has been a user experience professional, including aerospace, Department of Defense, utilities, and Fortune 100 ecommerce sites. He is currently working for NISC, a collective developing software for utility grids and telecommunications.